How A Manhattan Project Veteran Returns To The Central Question In Modern Science Eighty Years Later

By James Hall

Coauthor of the popular book The Sword of Damocles: Our Nuclear Age, now available on Audible, Kindle, and Amazon Books.

jameshall042999@gmail.com

Today one of the deepest questions in modern science is this: “Is the universe built on a kind of code, and could AI eventually understand it?”

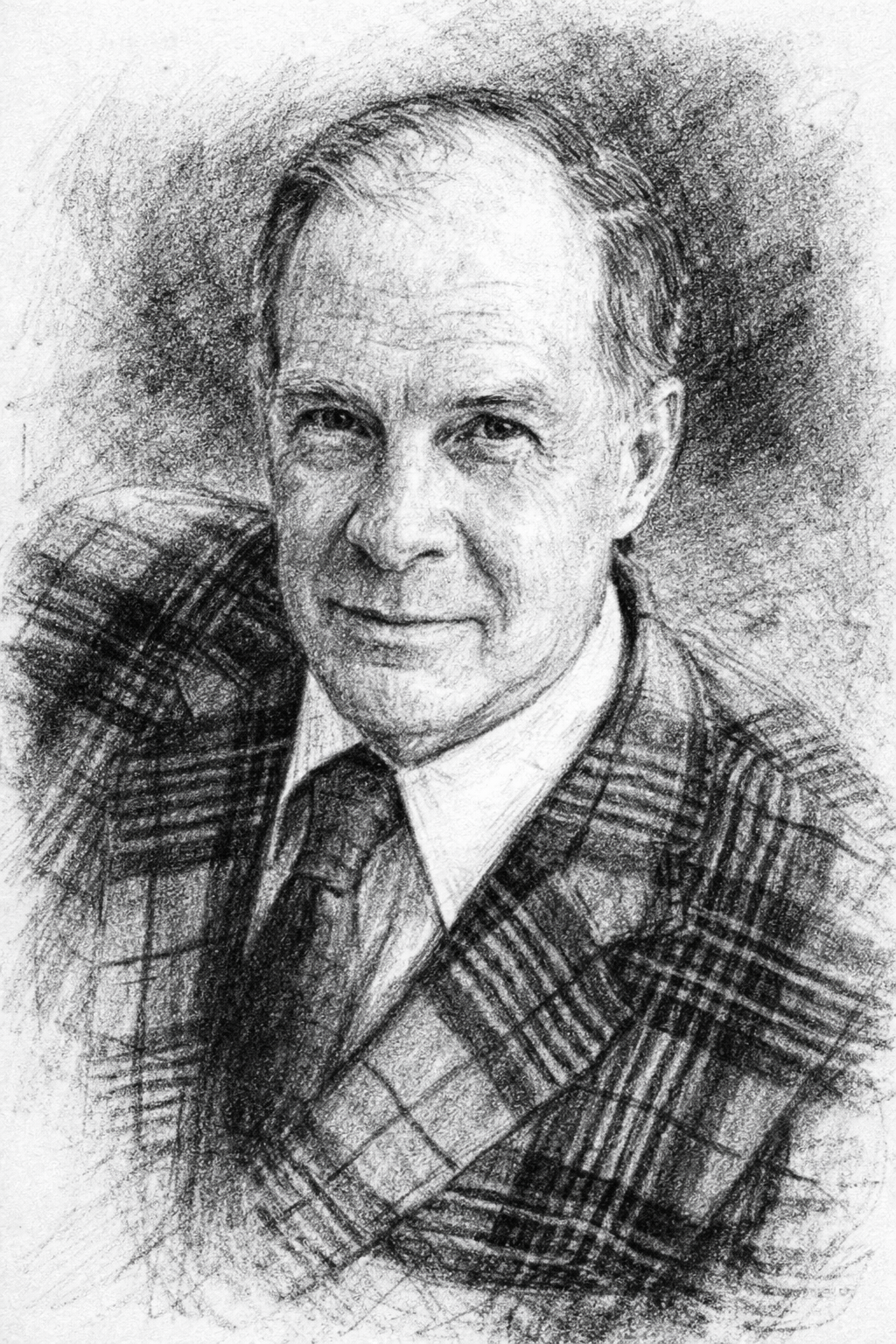

This isn’t just philosophy. It sits right at the intersection of information theory, physics, and computation. When people talk about the universe being “mathematical,” it can sound abstract or mystical. But the idea becomes much more concrete when you look at the life and work of someone like Dr. Richard Hamming, a mathematician whose ideas quietly shaped the modern world. Hamming wasn’t just a theorist. He worked on the Manhattan Project during World War II, helping solve the enormous computational problems behind early nuclear physics.¹

After the war, he joined Bell Labs, where he faced a very different challenge: how to send information reliably through noisy telephone lines. Hamming’s most influential work focused on what were then called “calculating machines,” early forms of digital computers. These machines processed information as sequences of 0s and 1s. John Tukey, another Manhattan Project veteran, coined the term “bit” for these units of information.²

In this early digital age, as today, 0s and 1s were the basic units of code, and a single flipped bit (a 0 turning into a 1, for example) could ruin an entire message. Hamming refused to accept that.

He therefore set out to invent error‑correcting codes: clever mathematical patterns that allow a system to detect and fix mistakes automatically. His method for inserting extra check bits alongside the data is now known as the Hamming Code.³ Error‑correcting codes in this tradition remain invaluable today, underpinning modern telecommunications, computer memory, and the Internet. Formally, Hamming codes are “linear error‑correcting block codes,” but “Hamming Code” is far easier to say. He described several variants, and the basic Hamming codes are mathematically “perfect”: each uses the fewest check bits possible for correcting any single‑bit error in a block of its length.⁴
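To make the idea concrete, here is a minimal Python sketch of the classic (7,4) Hamming Code, the best-known member of the family. Four data bits travel with three check bits, and the pattern of failed checks (the “syndrome”) spells out the position of a single flipped bit. The function names are illustrative, not taken from Hamming’s paper, and real systems implement this in hardware rather than in code like this.

```python
def hamming74_encode(data):
    """Encode 4 data bits as a 7-bit codeword (positions 1..7).

    Check bits sit at positions 1, 2 and 4; data bits fill positions 3, 5, 6, 7.
    Each check bit makes the number of 1s in its group of positions even.
    """
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4   # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4   # covers positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4   # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def hamming74_decode(word):
    """Correct up to one flipped bit; return (data bits, error position or 0)."""
    bits = list(word)
    syndrome = 0
    for pos, bit in enumerate(bits, start=1):
        if bit:
            syndrome ^= pos          # XOR of the positions that hold a 1
    if syndrome:                     # nonzero syndrome = position of the bad bit
        bits[syndrome - 1] ^= 1
    return [bits[2], bits[4], bits[5], bits[6]], syndrome


codeword = hamming74_encode([1, 0, 1, 1])
noisy = codeword.copy()
noisy[4] ^= 1                        # the channel flips the bit at position 5
print(codeword, noisy)               # [0, 1, 1, 0, 0, 1, 1] [0, 1, 1, 0, 1, 1, 1]
print(hamming74_decode(noisy))       # ([1, 0, 1, 1], 5)
```

Because every check group in a valid codeword has even parity, the XOR of the positions holding a 1 is zero for an error-free word, and a single flip makes it equal to that flip’s position. Two flips in the same block would be miscorrected, which is why this code is described as correcting single-bit errors.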

What Hamming could not have anticipated in 1950 was that, decades later, the mathematics he created for telephone lines would reappear in some of the deepest theories about the universe. In other words, Hamming’s codes now shape modern physics. As quantum physics advanced and researchers began designing quantum computers, which use “qubits” instead of “bits,” scientists realized that quantum information (the kind stored in qubits) is incredibly fragile. A tiny disturbance can destroy it. To protect it, researchers developed quantum error‑correcting codes, and to their surprise, these codes looked a lot like the ones Hamming invented; some are built directly from them.⁵
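One way to see the family resemblance: the seven-qubit Steane code, proposed by Andrew Steane in 1996, reuses the parity-check matrix of Hamming’s (7,4) code to catch both bit-flip and phase-flip errors. That reuse only works because every pair of rows in the matrix (including each row with itself) shares an even number of 1s, which makes the two kinds of quantum checks compatible. The short Python sketch below checks that property. It is a classical calculation about the matrix, not a quantum simulation, and the helper name is hypothetical.

```python
# Parity-check matrix of the (7,4) Hamming code. Column j (for j = 1..7) is the
# binary representation of j, so a single flipped bit produces a syndrome that
# spells out its position.
H = [[(j >> r) & 1 for j in range(1, 8)] for r in range(3)]


def overlap_is_even(row_a, row_b):
    """True if the two rows have 1s in common at an even number of positions."""
    return sum(a & b for a, b in zip(row_a, row_b)) % 2 == 0


# In the Steane code, each row of H defines one bit-flip (Z-type) check and one
# phase-flip (X-type) check. An X-type and a Z-type check commute exactly when
# their supports overlap on an even number of qubits, so every pair of rows,
# including each row with itself, must pass this test.
compatible = all(overlap_is_even(a, b) for a in H for b in H)

for row in H:
    print(row)
print("checks compatible:", compatible)   # checks compatible: True
```

If the rows overlapped an odd number of times, the bit-flip and phase-flip checks would disturb each other and could not be measured together, so not every classical code can be recycled this way.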

Then came an even stranger discovery. In some theories of the universe, especially those involving black holes and the “holographic principle,” the structure of spacetime itself behaves like an error‑correcting code.⁶ In these models, information about the interior of a region of spacetime is encoded redundantly on its boundary, so it can be reconstructed even if part of the boundary is lost, much as an error‑correcting code lets your phone recover a text message despite transmission errors. This raises a profound question: if the universe behaves like a code, could it actually be a code? Some physicists think so. John Wheeler, one of the greats of 20th‑century physics, famously said: “It from bit.”⁷ His meaning: physical things (“it”) arise from information (“bit”).
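The toy model in the Almheiri et al. paper (footnote 6) encodes information in three quantum pieces so that any two pieces suffice to rebuild it, while any single piece alone reveals nothing. A classical scheme with the same two properties is Shamir’s threshold secret sharing, swapped in here purely as an analogy. The sketch below assumes a small prime modulus and uses hypothetical function names; it illustrates the “recover from any two of three” idea, not the physics.

```python
import random

P = 257  # prime modulus (an assumption); secrets are integers 0..P-1


def split(secret, rng=random):
    """Spread `secret` over three shares so that any two of them recover it.

    Each share is a point (x, y) on the line y = secret + slope * x (mod P),
    taken at x = 1, 2, 3. Because the slope is random, a single share is
    equally consistent with every possible secret.
    """
    slope = rng.randrange(P)
    return {x: (secret + slope * x) % P for x in (1, 2, 3)}


def recover(shares):
    """Rebuild the secret from any two shares by evaluating the line at x = 0."""
    (x1, y1), (x2, y2) = list(shares.items())[:2]
    # Lagrange interpolation at x = 0 for a line through two points.
    # pow(a, -1, P) computes a modular inverse (Python 3.8+).
    w1 = x2 * pow((x2 - x1) % P, -1, P)
    w2 = x1 * pow((x1 - x2) % P, -1, P)
    return (y1 * w1 + y2 * w2) % P


shares = split(42, random.Random(0))
for lost in (1, 2, 3):
    survivors = {x: y for x, y in shares.items() if x != lost}
    print(f"share {lost} lost -> recovered {recover(survivors)}")  # recovered 42 each time
```

The design mirrors the point of the holographic models: no single piece holds the information, yet it survives the loss of any one piece.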

In this view, the universe at its deepest level is made not of matter but of information, structured by mathematical rules. This is where AI enters the story. Could AI understand this “Universal Code”? AI is uniquely good at finding patterns humans miss. It can sift through enormous mathematical spaces, detect hidden symmetries, and propose new structures. Already, AI has helped generate new mathematical conjectures, discover new materials, predict protein structures with remarkable accuracy, and analyze quantum systems.⁸ If the universe does have a deep informational structure, something like a code, AI might be the first tool capable of recognizing it.

But it’s important to be clear about what AI cannot do. However much we might wish otherwise, AI cannot access a cosmic database or read hidden information fields, and it cannot compute using anything outside the physical hardware it runs on. AI can only work with the laws and data available to it. Within those limits, though, it might uncover patterns that humans have overlooked for centuries.

Quantum computers add fuel to this curiosity. A qubit can exist in a superposition of 0 and 1 rather than being simply one or the other. Multiple qubits can become entangled, acting like a single system even when far apart. And quantum algorithms use interference among these superposed possibilities to reinforce correct answers and cancel wrong ones. To some people, this feels like the machine must be “connecting” to something beyond itself: a universal information field or hidden layer of reality. But the scientific explanation is simpler: quantum computers exploit the rules of quantum mechanics, not an external source of information. They don’t reach outside the universe. They operate within the universe’s laws, laws that may themselves be deeply informational.
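To see that nothing mysterious is required, here is a minimal Python sketch that simulates two qubits as a list of four amplitudes. It applies a Hadamard gate to create a superposition, then a CNOT gate to entangle the pair into a Bell state, and shows interference: two Hadamards in a row cancel back to the starting state. Everything is ordinary arithmetic following the rules of quantum mechanics; the gate helpers are hand-written for illustration rather than taken from any quantum-computing library.

```python
import math
import random

H_AMP = 1 / math.sqrt(2)


def hadamard_q0(state):
    """Apply a Hadamard gate to qubit 0 (the low-order bit of the index).

    The state is a list of 4 amplitudes for the basis states |q1 q0> = 00, 01, 10, 11.
    """
    out = [0.0] * 4
    for i, amp in enumerate(state):
        i0, i1 = i & ~1, i | 1          # partner states with q0 = 0 and q0 = 1
        if (i & 1) == 0:                # H|0> = (|0> + |1>) / sqrt(2)
            out[i0] += H_AMP * amp
            out[i1] += H_AMP * amp
        else:                           # H|1> = (|0> - |1>) / sqrt(2)
            out[i0] += H_AMP * amp
            out[i1] -= H_AMP * amp
    return out


def cnot_q0_to_q1(state):
    """Flip qubit 1 whenever qubit 0 is 1: swap the amplitudes of |01> and |11>."""
    out = list(state)
    out[1], out[3] = state[3], state[1]
    return out


start = [1.0, 0.0, 0.0, 0.0]            # both qubits begin in |0>

# Superposition, then entanglement: the Bell state (|00> + |11>) / sqrt(2).
bell = cnot_q0_to_q1(hadamard_q0(start))
probs = [round(abs(a) ** 2, 3) for a in bell]
print(probs)                            # [0.5, 0.0, 0.0, 0.5]

# Sampling measurements: the two qubits always agree (00 or 11).
rng = random.Random(1)
outcomes = rng.choices(["00", "01", "10", "11"], weights=probs, k=1000)
print(sorted(set(outcomes)))

# Interference: a second Hadamard cancels the |01> amplitude exactly.
print([round(a, 3) for a in hadamard_q0(hadamard_q0(start))])  # [1.0, 0.0, 0.0, 0.0]
```

A classical simulation like this needs 2^n amplitudes for n qubits, which is why it stops being practical long before real quantum hardware does; the rules, however, are the same.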

In conclusion, when you put all this together, you get a surprisingly elegant idea: the universe may be built on information at its core. AI might one day help us understand that structure more deeply. This doesn’t mean the universe is literally a computer, but it does suggest that information is woven into the foundation of everything, from particles to spacetime. And if there is a deeper code beneath reality, AI may be the first tool capable of seeing its outlines.

Thanks to Dr. Richard Hamming, a scientific legend of decades past, we at least now understand the math.

Footnotes

1. Although best known as a mathematician, Hamming’s early interests leaned toward engineering. Born in Chicago in 1915, he grew up fascinated with the engineering profession. But during the Great Depression, financial hardship limited his options, and the only scholarship available to him came from the University of Chicago, which had no engineering school. He therefore majored in mathematics, earning his B.S. in 1937, a circumstance he later regarded as a fortunate twist of fate.

He often remarked, “As an engineer, I would have been the guy going down manholes instead of having the excitement of frontier research work.” He went on to earn a master’s degree at the University of Nebraska in 1939 and a Ph.D. at the University of Illinois in 1942, with a dissertation on linear differential equations. He then joined the faculty there as a mathematics instructor.

By 1945, like many of the country’s brightest minds, Dr. Hamming found himself working on the Manhattan Project. He served in Hans Bethe’s division at Los Alamos, programming early IBM calculating machines used to grind through endless physics equations. His wife, Wanda Little, also worked with Bethe and later with Edward Teller. She served as a “human computer,” the term for assistants (during the war, usually women) who carried out complex mathematical calculations by hand or with slide rules.

Dr. Hamming remained at Los Alamos until 1946, when he left to take a position at Bell Telephone Laboratories in New Jersey. Preparing for the cross‑country trip, he purchased a car from a Los Alamos colleague, Klaus Fuchs. To Hamming’s astonishment and horror, he learned years later, via a very inquisitive FBI agent, that his friend had been a Soviet spy and was now the centerpiece of one of the most significant espionage cases in history.

Hamming’s time on the Manhattan Project profoundly shaped his thinking. He came to appreciate the power of computing and, in particular, the future importance of computer simulations. He realized, well before most, that simulations could accomplish what would often be impossible to replicate in a laboratory. See William Aspray, John von Neumann and the Origins of Modern Computing (Cambridge, MA: MIT Press, 1990), 112–115.

2. John W. Tukey, “The Teaching of Concrete Mathematics,” American Mathematical Monthly 70, no. 3 (1963): 350.

3. Richard W. Hamming, “Error Detecting and Error Correcting Codes,” Bell System Technical Journal 29, no. 2 (1950): 147–160.

4. F. Jessie MacWilliams and Neil J. A. Sloane, The Theory of Error‑Correcting Codes (Amsterdam: North‑Holland, 1977), 45–48.

5. Peter W. Shor, “Scheme for Reducing Decoherence in Quantum Computer Memory,” Physical Review A 52, no. 4 (1995): R2493–R2496.

6. Ahmed Almheiri et al., “Bulk Locality and Quantum Error Correction in AdS/CFT,” Journal of High Energy Physics 2015, no. 2 (2015): 1–34.

7. John Archibald Wheeler, “Information, Physics, Quantum: The Search for Links,” in Complexity, Entropy, and the Physics of Information, ed. W. H. Zurek (Redwood City, CA: Addison‑Wesley, 1990), 3–28.

8. Kevin K. Yang et al., “Machine Learning for Protein Engineering,” Nature Methods 16 (2019): 687–694.

“He taught the future to correct its own errors, leaving a lattice of insight that still holds up the weight of modern science.”

Art and poetry by James Hall.