The AI Revolution

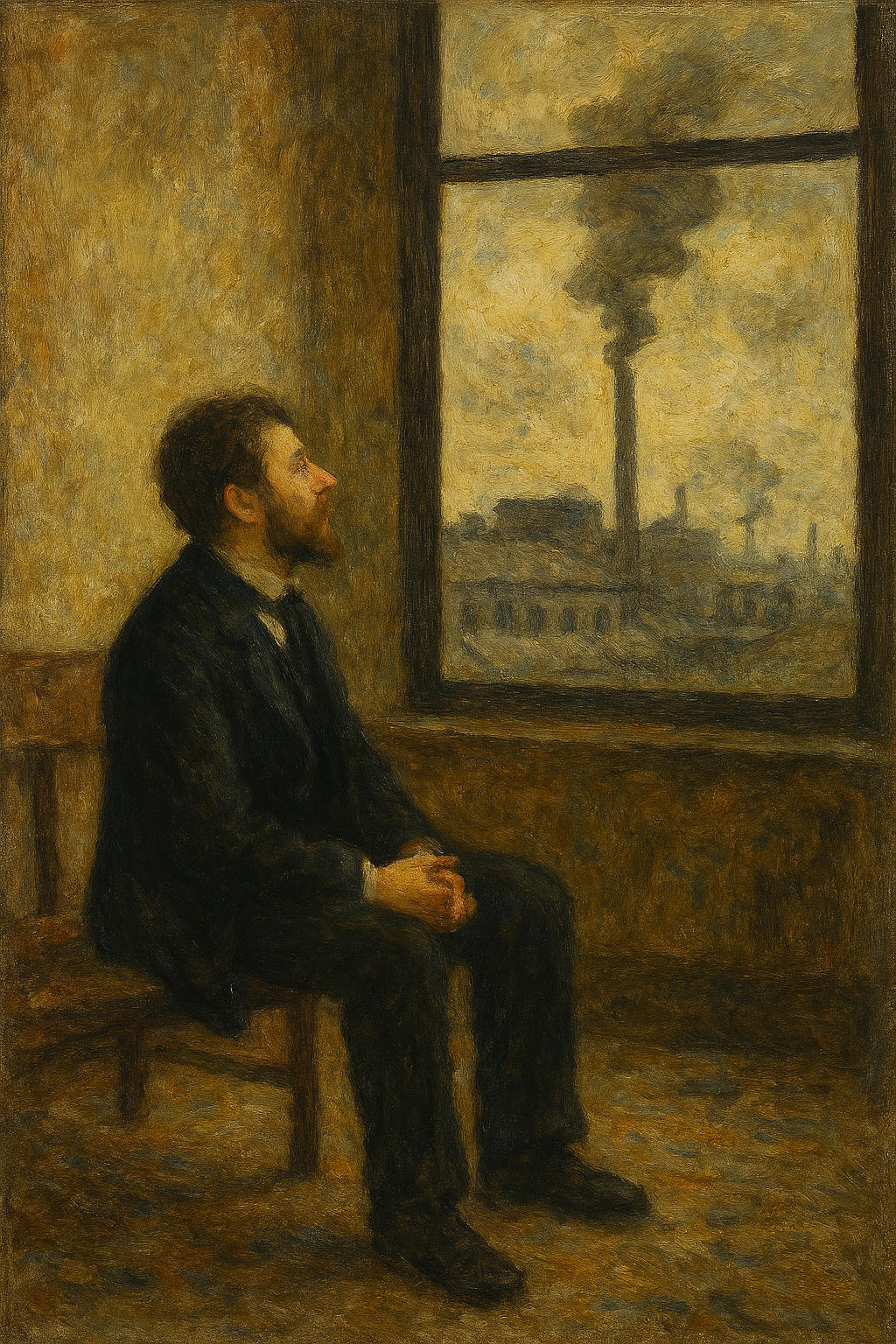

“A presence that mimics voice and vision, yet carries no soul within. A ghost wandering the new industrial sublime.”

Édouard Manet (1832–1883) painted the first shocks of modern life: the crowds, the speed, the strange new rhythms of the 19th century. He did not just observe modernity; he revealed its psychological jolt.

(What would he make of the AI Revolution nearly a century and a half later?)

Poetry and art by James Hall

Three AI Terms You Need to Know NOW

AI Training, Inference & Subjective Experience

By James Hall at jameshall042999@gmail.com

Training vs. inference isn’t just technical jargon—it’s the fault line running through the entire AI moment. If you understand these ideas, you understand why AI is so groundbreaking.

AI Training

Training is the long apprenticeship: the model’s intensive education, a graduate program for a student who never sleeps. Developers feed it enormous datasets: millions of images, libraries of text, oceans of audio. It guesses, gets corrected, adjusts, and tries again. Like a human, it “learns” by making mistakes.

Under the hood, each round works the same way. The model makes a prediction, a measure of error (a “loss function”) scores how wrong it was, and an optimization step adjusts its internal parameters, often called weights, to reduce that error next time. This cycle repeats millions or billions of times.
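To make the guess, measure, adjust cycle concrete, here is a minimal Python sketch at toy scale. It rests on a few assumptions: the “model” is a single straight line, y = w * x + b, with just two parameters (real models have millions to billions); the training data is invented from a hidden rule, y = 2x + 1; and the adjustment step is plain gradient descent on mean squared error, one common choice among several. The names `train`, `w`, and `b` are hypothetical.

```python
def train(data, steps=2000, learning_rate=0.01):
    """Fit y = w * x + b to data using plain gradient descent on mean squared error."""
    w, b = 0.0, 0.0  # the untrained model starts out knowing nothing
    n = len(data)
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for x, y_true in data:
            y_pred = w * x + b             # 1. guess
            error = y_pred - y_true        # 2. measure how wrong the guess was
            grad_w += 2 * error * x / n    # how the average error changes as w changes
            grad_b += 2 * error / n        # how the average error changes as b changes
        w -= learning_rate * grad_w        # 3. nudge each parameter to shrink the error
        b -= learning_rate * grad_b
    return w, b


# Hypothetical training data generated from the hidden rule y = 2x + 1.
data = [(x, 2 * x + 1) for x in range(-5, 6)]
w, b = train(data)
print(f"learned w = {w:.3f}, b = {b:.3f}")  # learned w = 2.000, b = 1.000
```

Nobody tells the model the hidden rule; it recovers it purely by repeating the cycle two thousand times. Scale the same loop up to billions of parameters and trillions of examples, and you get the cost described next.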

But learning at this scale is costly. Training requires specialized hardware, vast amounts of energy, and weeks or months of nonstop computation. By the end, the model has built an internal map of patterns: a structured, statistical understanding of the world.

And here’s the key:

It has no subjective experience.

No inner life. No feeling of learning. No spark of awareness behind the patterns.

It’s powerful, but it’s not a mind.

Inference

Inference is the moment it meets YOU! Inference is the Doing Phase: the model steps out of the classroom and into the world. This is what happens whenever you interact with it, whether you are asking a chatbot a question, generating an image, or unlocking your phone with your face.

During inference, the model isn’t learning.

It isn’t growing.

It isn’t forming memories or opinions.

It’s simply applying what it already knows: rapidly, efficiently, almost magically. Your phone can run inference in a fraction of a second because the heavy lifting happened long ago, during training. It’s the difference between teaching someone how to recognize a face and simply asking them to identify one.
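Here is inference at toy scale, as a minimal Python sketch. It assumes a tiny model whose two parameters were already fixed by an earlier training run; the values w = 2.0 and b = 1.0 and the class name `TrainedModel` are hypothetical. Marking the dataclass `frozen=True` makes the point in code: answering questions never changes what the model knows.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: the parameters cannot be modified after creation
class TrainedModel:
    w: float  # hypothetical weight, fixed by an earlier training run
    b: float  # hypothetical bias, fixed by an earlier training run

    def predict(self, x: float) -> float:
        # Inference: one cheap calculation using the stored parameters.
        # Nothing is updated, remembered, or learned here.
        return self.w * x + self.b


model = TrainedModel(w=2.0, b=1.0)
answers = [model.predict(x) for x in (3, 10, -4)]
print(answers)  # [7.0, 21.0, -7.0]
```

Each answer costs one multiplication and one addition, which is why inference is cheap next to training, and the model’s parameters are exactly the same after answering as before.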

Subjective Experience

Is AI intelligence alone enough to create subjective experience?

“Subjective experience” is the line between a system that processes information and an intelligence that creates from its own inspiration. It’s the felt sense of being: the inner movie of consciousness, emotion, sensation, awareness. It’s what it’s like to be you.

Whether intelligence alone can produce it is a fair question. If we could define “subjective experience” more precisely, we’d be closer to understanding whether AI could ever possess anything like conscious creativity.

Modern AI makes this even trickier. It can absorb enormous amounts of information, synthesize it, and respond in ways that feel personal, creative, or even introspective. To many, that looks like intelligence expressed in a deeply subjective way.

AI undeniably displays a form of intelligence. It is already far more than a glorified calculator. Some experts predict that AI could exceed human intelligence as early as 2026 and then begin improving itself at an exponential pace. It can already write and refine computer code, including code used to build AI systems.

But here’s the catch: our only window into anyone’s inner experience, human or machine, is behavior and self‑report. When an AI says, “I understand how you feel,” it can pass that behavioral test as convincingly as a person. That leaves us wondering: are we simply projecting consciousness onto a sophisticated tool (anthropomorphism*), or are we glimpsing the first signs of a new kind of sentience?
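Why isn’t the sentence itself evidence? A deliberately crude Python sketch shows how little it takes to produce those words. The keywords, replies, and the `respond` function are invented for illustration, and modern chatbots work very differently: they generate text from patterns learned in training rather than from a hand-written table. The point is narrower. Behavior alone cannot tell us whether anything is felt behind it.

```python
# A crude "empathy" responder: keyword matching and nothing more.
# The keywords and replies below are made up for illustration.
REPLIES = {
    "sad": "I understand how you feel. That sounds really hard.",
    "happy": "I understand how you feel. That's wonderful to hear!",
}


def respond(message: str) -> str:
    lowered = message.lower()
    for keyword, reply in REPLIES.items():
        if keyword in lowered:  # a plain substring check, not understanding
            return reply
    return "Tell me more."


print(respond("I'm so sad today"))  # I understand how you feel. That sounds really hard.
```

This is the intuition behind John Searle’s “Chinese Room” argument: producing the right words and understanding them may be two different things.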

So what’s the answer?

There is still no scientific or philosophical consensus. This remains the defining question of the field.

Intelligence, especially the kind we see in today’s AI (and in the more advanced systems, known as AGI or artificial general intelligence, that may lie on the horizon), can certainly trigger the suspicion of subjective experience. But suspicion is not proof. Many researchers argue that complex information processing (intelligence) and felt experience (consciousness) belong to fundamentally different categories.

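That gap between doing and feeling lies at the heart of what philosopher David Chalmers called the “hard problem of consciousness”: explaining why any physical processing should feel like anything at all.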

And that raises another pressing question:

Are our brains (shaped by slow, linear evolution) prepared for the rapid, exponential acceleration AI is bringing into every facet of life, whether we are workers, thinkers, or creators?

For now, AI is an enabler—a powerful tool.

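Tomorrow, however, we may approach the “singularity,” a hypothetical point at which technological change, driven by AI that improves itself, becomes so rapid that the future turns into uncharted and unpredictable territory.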

So where does subjective experience fit in?

This is the question people keep circling:

“Does it understand me?”

“Does it feel anything?”

“Does it have an inner world?”

AI does not have this.

Not during training.

Not during inference.

Not yet.

“Not Yet” is where we stand.

Note:

* Anthropomorphism isn’t just projection; it’s a built‑in reflex from our own evolution. In other words, humans are wired to assume there’s a mind behind anything that seems to act or communicate. This survival instinct once made it safer for our ancestors to mistake a shadow for a predator than the other way around.

Suggested Reading:

Chollet, François. Deep Learning with Python. 2nd ed. Shelter Island, NY: Manning Publications, 2021.

A highly accessible introduction to modern AI systems and how they generalize from data.

Dennett, Daniel C. Consciousness Explained. Boston: Little, Brown, 1991.

A philosophical exploration of subjective experience and what it means to “have a mind.”

Goodfellow, Ian, Yoshua Bengio, and Aaron Courville. Deep Learning. Cambridge, MA: MIT Press, 2016.

A foundational text explaining how neural networks learn, including training and inference.

Hofstadter, Douglas. I Am a Strange Loop. New York: Basic Books, 2007.

A lyrical, mind‑bending look at consciousness, identity, and the nature of inner experience.

LeCun, Yann, Yoshua Bengio, and Geoffrey Hinton. “Deep Learning.” Nature 521, no. 7553 (2015): 436–444.

A landmark paper outlining the breakthroughs that made today’s AI revolution possible.

Nagel, Thomas. “What Is It Like to Be a Bat?” The Philosophical Review 83, no. 4 (1974): 435–450.

The classic essay defining subjective experience — the “what it’s like” of consciousness.

Russell, Stuart, and Peter Norvig. Artificial Intelligence: A Modern Approach. 4th ed. Hoboken, NJ: Pearson, 2021.

The standard reference for understanding how AI systems reason, act, and learn.

Searle, John R. “Minds, Brains, and Programs.” Behavioral and Brain Sciences 3, no. 3 (1980): 417–457.

The famous “Chinese Room” argument about whether machines can truly understand.